ep. 61. Expanding the Frame: Designing for Systems, Not Just Users


One of my biggest design takeaways from 2024 was that systems thinking is non-negotiable.

What is systems thinking, why does it matter in design, and how can we apply it? In today’s episode, I explore these questions and introduce the Iceberg Model, a powerful tool for uncovering the deeper structures and mental models that shape surface-level events. By identifying root causes, we can move beyond reactive problem-solving and design for long-term impact.

What is Systems Thinking?

Systems thinking is a mindset and practice that examines how different parts interact and influence each other to form a larger whole. To illustrate, let’s consider the story of the Seven Blind Mice:

The mice encounter a mysterious "Something" near their pond. Each mouse investigates it on a different day, identifying only part of the whole - like a pillar (leg), a snake (trunk), or a fan (ear) - based on what they touch. Finally, the seventh mouse explores the entire "Something" and discovers it’s an elephant.

The lesson? Expand our frame to understand how individual parts come together to form the bigger picture. This is the essence of systems thinking.

Why Systems Thinking for Design? Why Now?

“Who is the end user?” “What problem are we trying to solve for them?”

If you work in tech, you’ve probably asked or been asked these questions. They’re not inherently bad questions. Asking them means you’re considering the humans for whom you’re creating technology, and you’ve got a clear focus on whom you’re building for. That’s great!

Where I get concerned is when these are the only questions we ask while scoping the problem space, without considering what lies beyond the frame of that end user.

Why does it matter what’s out of frame? Because the technology we create generates ripple effects that resonate across large-scale systems, reaching far beyond the end user. Looking at the “fan” rather than the “elephant” leads to unintended consequences for society and the environment, and limits innovation to surface-level fixes.

Now, technology has always affected systems beyond its first-order purpose (think wheels, steam engines, lasers). However, the scale and complexity of our design challenges have only increased: technology’s ripple effects on large-scale systems are amplified in our interconnected and interdependent world, where advanced capabilities are increasingly offloaded to AI.

How Systems Thinking Can Help

Systems thinking practice helps us navigate complex problem spaces and create long-term impact on a broad scale. A key tool in this process is systems mapping - a method for visualizing how different elements interact within a larger system.

There are many ways to map a system, from causal loop diagrams to behavior over time graphs, but the principles remain the same: identify key components, map their relationships, and analyze how they shape outcomes. This process reveals hidden patterns, uncovers leverage points for change, and informs more strategic, sustainable decisions.
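To make those three steps concrete, here is a minimal Python sketch of a causal loop diagram. It is an illustration under stated assumptions, not a standard tool: the variables (notifications, engagement, user_fatigue) and their link signs are hypothetical, chosen only to show the mechanics. Components are nodes, relationships are signed links (+1 when two variables move together, -1 when they move in opposite directions), and the analysis step labels each feedback loop as reinforcing or balancing by multiplying the signs around it.

```python
from math import prod

# Hypothetical causal links for illustration only.
# (cause, effect) -> +1 if they move together, -1 if they move in opposite directions.
LINKS = {
    ("notifications", "engagement"): +1,
    ("engagement", "notifications"): +1,
    ("engagement", "user_fatigue"): +1,
    ("user_fatigue", "engagement"): -1,
}


def find_loops(links):
    """Return each simple feedback loop once, as a list of nodes."""
    graph = {}
    for cause, effect in links:
        graph.setdefault(cause, []).append(effect)

    loops = []

    def walk(start, node, path):
        for nxt in graph.get(node, []):
            if nxt == start:
                loops.append(path[:])  # closed the loop back to where we began
            elif nxt not in path and nxt > start:
                # Only visit nodes that sort after the start, so each loop
                # is reported once, beginning at its alphabetically first node.
                walk(start, nxt, path + [nxt])

    for start in sorted(graph):
        walk(start, start, [start])
    return loops


def classify(loop, links):
    """Label a loop by the product of its link signs."""
    edges = zip(loop, loop[1:] + loop[:1])  # includes the closing edge
    sign = prod(links[edge] for edge in edges)
    return "reinforcing" if sign > 0 else "balancing"


if __name__ == "__main__":
    for loop in find_loops(LINKS):
        print(" -> ".join(loop + loop[:1]), "is", classify(loop, LINKS))
```

Reinforcing loops amplify change and balancing loops resist it, which is one way a map can point toward leverage points. A real mapping exercise would draw its variables and link signs from research rather than from guesses like these.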

The Iceberg Model

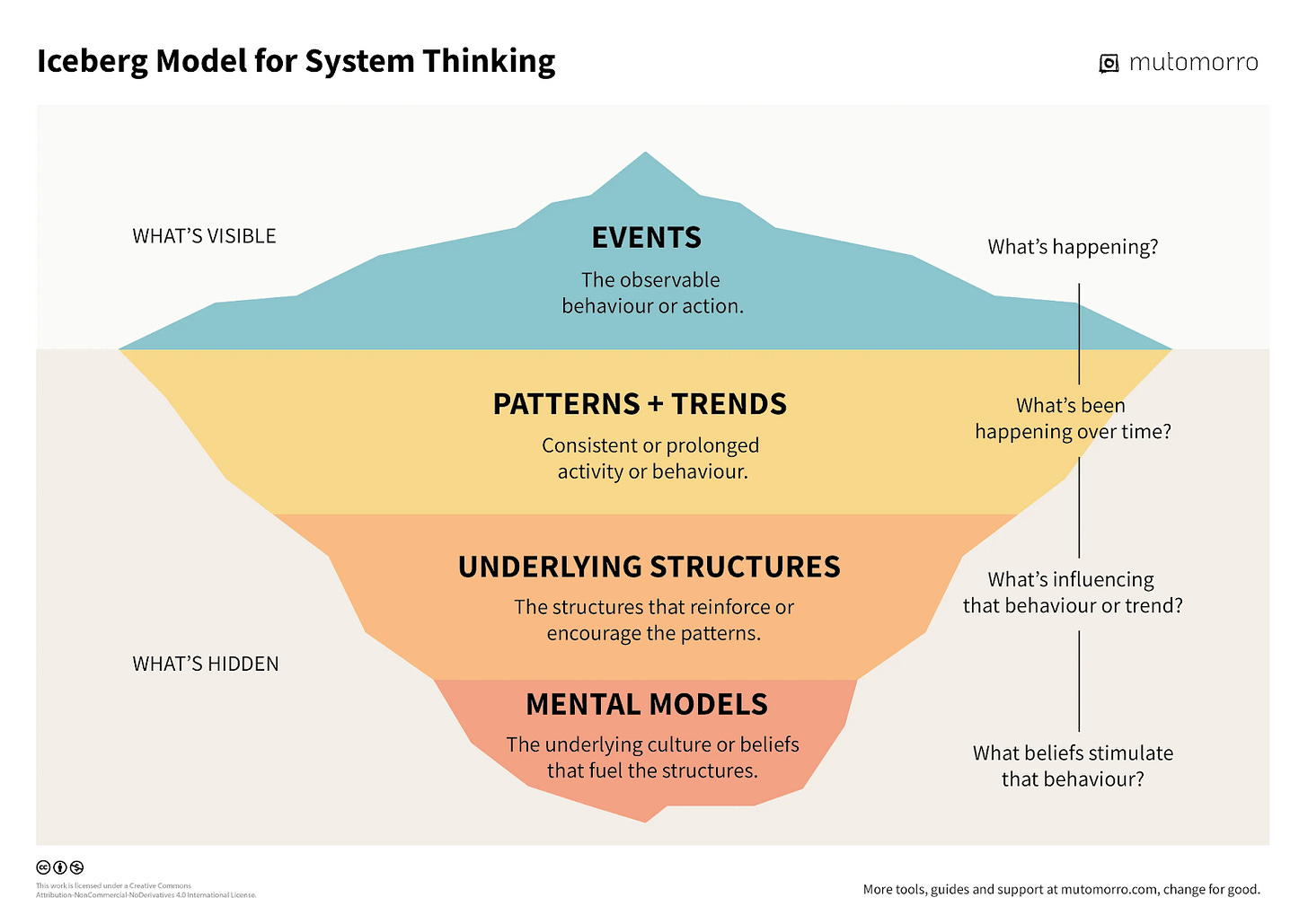

The Iceberg Model helps reveal the hidden forces shaping visible events, making it a powerful entry point into systems thinking. Its versatility (applicable to everything from customer behavior to global trends) and approachability (no complex terminology) make it an ideal tool for getting started with systems thinking. Let’s break it down.

Events are surface-level occurrences - observable behaviors or actions. Examples include a supply chain disruption due to extreme weather or a stock market drop after a major announcement. While highly visible, events are symptoms of deeper system dynamics.

Patterns and trends emerge beneath the surface - recurring behaviors over time, such as seasonal cycles or the shift to remote work. These patterns don’t happen randomly; they are shaped by underlying structures that reinforce them. Identifying patterns requires research and long-term observation.

One level deeper, we find structures - the systems, rules, and incentives that create patterns. Examples include power dynamics or financial incentives that shape decision-making and reinforce behaviors within a system.

At the deepest level, we reach mental models - the beliefs, assumptions, and values that shape how people perceive and engage with the system. These can include ideas like "innovation only happens in startups" or "seniority equates to expertise." Mental models are often unspoken, yet they exert a profound influence on how systems function and evolve.

Applying the Iceberg Model

Let’s try an activity I led with my Designing Future Systems students in our first class last week, demonstrating how the Iceberg Model can help look past surface-level events to identify root causes and assumptions.

We’ll take a news article and analyze its events, along with the patterns, structures, and mental models that underlie them. Today’s article is Reuters’ “DeepSeek sparks AI stock selloff; Nvidia posts record market-cap loss,” published January 27, 2025.

Events: What were the observable behaviors or actions?

Chinese AI startup DeepSeek launched DeepSeek-V3, a highly efficient, low-cost AI model.

Global investors reacted by selling off tech stocks, fearing its impact on AI incumbents.

Nvidia lost a record $593 billion in market value, dragging down the Nasdaq and semiconductor sector.

Patterns & Trends: What larger patterns do these events reflect?

US-China AI Race: The ongoing competition continues to intensify.

Open-Source AI Disruption: DeepSeek highlights the performance strides open-source AI has made, challenging the dominance of proprietary AI models.

AI Stock Volatility: Market swings fueled by hype and uncertainty about the returns on AI spending.

Structures: What structures reinforce or encourage these patterns and trends?

US-China Trade Policies: U.S. export restrictions on AI chips (e.g., Nvidia's high-end GPUs) aimed to curb China’s AI development, yet these policies push China to accelerate domestic alternatives.

Venture Capital & AI Investment Models: VCs have perceived open-source AI as riskier than proprietary models, as the latter have a clear path to monetization. DeepSeek-V3 threatens traditional investment models based on closed, high-cost AI, driving investor uncertainty.

Algorithmic Trading & Financial Market Mechanisms: Automated trading amplifies volatility, while AI-heavy stocks dominate market cap and index funds, causing broad market ripples.

Mental Models: What assumptions underlie the entire system?

“AI Requires Massive Compute Resources”: DeepSeek challenges the prevailing belief that only companies able to spend billions on computing power can develop top AI models.

“The US Will Always Lead in AI”: DeepSeek’s success shakes the US’s assumption that it will maintain AI dominance.

“Tech Restrictions Slow Down Competitors”: The belief that restricting chip exports would stall China’s AI progress is being challenged, as Chinese firms adapt and develop alternative solutions.

Understanding the deeper layers of a system helps us move beyond reactive decision-making to a proactive approach, as these layers move at slower timescales than events (more on pace layers in a future episode).

Takeaways

Technology Shapes Systems, Not Just “Users”

Design decisions ripple far beyond end users, shaping society, industry, and the environment. A systems approach enables more responsible, scalable, and lasting innovation.

Short-Term Fixes Undermine Long-Term Resilience

Focusing solely on end users without considering the broader system can create unintended consequences, reinforcing fragility rather than solving root issues.

Systems Thinking Strengthens Strategy

A systems approach helps us uncover the hidden forces behind behavior and actions, helping teams anticipate change and make stronger strategic decisions.

Human-Computer Interaction News

Can AI Learn Language through Real-world Interaction like Humans? A study from Vrije Universiteit Brussel argues that today's LLMs miss key aspects of human learning, such as context, intent, and social cues, leading to data inefficiency, limited reasoning, and biases. Researchers propose an AI model that learns language through interactive, real-world communication, mirroring human development.

AI Agents Accurately Simulate Human Behavior: Stanford researchers applied LLMs to qualitative interviews to simulate the behaviors and attitudes of 1,052 people with high accuracy. These agents offer a potential new approach to studying human behavior in policymaking and social science.

37% of HR Leaders Prefer AI Over Recent Grads: A Hult Business School survey finds that 89% of companies avoid hiring recent college grads, citing a lack of workforce readiness. Employers prioritize communication, curiosity, and collaboration, and highlight gaps in growth mindset, ethics, and self-awareness.

Designing emerging technology products? Sendfull can help you find product-market fit. Reach out at hello@sendfull.com

That’s a wrap 🌯. More human-computer interaction news from Sendfull in two weeks!