ep. 92. How a Design-Leader-Turned-Founder Decides When to Hire AI


It’s 1997.

Matt Hanson is sitting in a computer lab at the University at Buffalo, three chapters deep into a book about 3D shaders, trying to make a digital surface look like wood.

“All I want to do is tell this computer ‘wood.’ How does it not know? All this science and math, understanding the light properties of real-world objects, it was computationally possible, but I have to build it from stale code in books? When we can just tell it ‘Walnut’? What will we make then?”

That moment had been building since age 12, when Matt received two gifts in the same year: art lessons and a computer. By college he was studying motion graphics, design, 3D modeling and animation.

Almost thirty years later, that curiosity at the intersection of art and technology is still guiding Matt’s career.

He cut his teeth in motion design and visual effects at studios like Buck and Psyop, starting as a compositor and broadening into more of the production pipeline. By 2015, he was directing architectural-scale installations and mixed reality screen content at Viacom, before mixed reality was cool. Then came eight years at Meta leading design teams: scaling Spark AR into a global creator platform, shaping mixed reality features for Quest 3 and the Orion AR glasses, and collaborating with research scientists on embodied AI.

Today Matt is a solo founder, building with the tools his college-kid self in the Buffalo computer lab was waiting for.

Getting Back to Making

After Meta, Matt took a VP of Design role at an entertainment company, just as Claude Code and Cursor were arriving on the scene. One weekend, he vibe coded a site to give away pieces from his pottery practice to colleagues. They could browse, pick one, and drop in a shipping address.

While the project was small, the realization wasn’t:

“After managing people and teams for years, I had this power of making a thing again, and it started to build up. I was always a maker before. I just missed making stuff.”

Building with AI felt like working with a capable team, only faster.

“Almost every discipline I’d normally hire for, I can now bootstrap with AI. It’s hat consolidation.”

Importantly, hat consolidation only counts if you don’t lower the bar on any of the hats. That standard would shape everything that came next.

Up in the Stack

Matt approached the post-Meta period the way he’d approached his CG career: keep moving up the pipeline.

He’d started as a compositor and spent years broadening into modeling, rendering, creative and VFX direction, and then design leadership. Each step put more of the production stack under his own hands. AI was the same move, but compressed.

“Every experiment was an exercise in what I can do as well as a professional.”

The goal isn’t to be that professional, but to match the quality that professional would produce.

In his VP of Design role, Matt was prototyping at a pace he would have been thrilled to see from his prototypers back at Meta. Solo, in his own org, at staff velocity. He built a range of prototypes, from a small icebreaker game to experiences in domains like talent representation, real estate, and investment management.

Then came two parallel bets: an email app and a recipe app.

The email app failed a two-part test: it had too many dependencies for one person to hold reliably, and a trust ceiling that demanded near-perfect execution.

“I didn’t want to mess with people’s email because that’s important. It’s like a telephone. You start to bend how people are communicating in a way they don’t expect—and there are a lot of ways it could go wrong, many with consequences I didn’t want to risk.”

A bad recipe means a bad dinner. A missed email could mean a missed mortgage payment. Cooking had a manageable dependency surface and a forgiving trust ceiling.

Matt went all in.

A Recipe for Trust

At first, Matt was building features for his own use, mostly to address his aversion to “cooking math”: aggregating ingredients and calculating proportions across recipes so you can shop for them all at once. Useful, but not a differentiator.
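The "cooking math" described above can be sketched in a few lines. This is a minimal illustration, not Heirloom's actual code: the recipe format, the `shopping_list` function, and the sample quantities are all assumptions made up for the example. It scales each recipe to the servings you want, then totals ingredients that share a name and unit.

```python
from collections import defaultdict
from fractions import Fraction

# Hypothetical recipe format (an assumption for this sketch):
#   name -> (servings the recipe yields, [(ingredient, quantity, unit), ...])
def shopping_list(recipes, servings_wanted):
    """Scale each recipe to the servings wanted and total shared ingredients."""
    totals = defaultdict(Fraction)  # missing keys start at Fraction(0)
    for name, (servings, ingredients) in recipes.items():
        scale = Fraction(servings_wanted[name], servings)
        for item, qty, unit in ingredients:
            # Fractions keep "1/2 cup" exact instead of drifting as floats.
            totals[(item, unit)] += Fraction(qty) * scale
    return dict(totals)

# Made-up sample data.
recipes = {
    "pancakes": (4, [("flour", "3/2", "cup"), ("egg", 2, "each")]),
    "biscuits": (8, [("flour", 2, "cup"), ("butter", "1/2", "cup")]),
}
totals = shopping_list(recipes, {"pancakes": 8, "biscuits": 4})

for (item, unit), qty in sorted(totals.items()):
    print(f"{qty} {unit} {item}")
```

The sketch only merges ingredients that already share a unit. Converting between units (cups to grams, say) would need per-ingredient density data and is left out.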

That got him thinking about what actually mattered to him and his family, and what they weren’t getting.

Some important context: Matt comes from a family that Cooks, with a capital “C”.

His sister runs her own cooking schools.

His mother and grandmother left behind handwritten recipes, some more than a hundred years old. The paper is fragile; the ink, fading. Some recipes are missing steps, some call for antiquated ingredients like “1 cup of fat,” and some have been smudged into illegibility. Cooking from those recipes today is a challenge.

Filling in those gaps is where AI earned its place. Heirloom can interpret a damaged or incomplete recipe, inferring a missing step or clarifying a smudged measurement, without overwriting what’s actually on the page.
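One way to picture "inferring without overwriting" is a record that stores what is legible on the page separately from anything a model suggests. The sketch below is an assumption about how such a structure could look, not a description of Heirloom's implementation; the `Step` class, its field names, and the sample steps are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)  # frozen: a transcription cannot be mutated after capture
class Step:
    transcribed: Optional[str]      # what is legible on the page; None if the step is missing
    inferred: Optional[str] = None  # hypothetical AI suggestion, always stored separately

    def display(self) -> str:
        """Show the original text, clearly marking anything that was inferred."""
        if self.transcribed is None and self.inferred is None:
            return "[missing step]"
        if self.transcribed is None:
            return f"[inferred] {self.inferred}"
        if self.inferred is None:
            return self.transcribed
        return f"{self.transcribed} [suggested reading: {self.inferred}]"

# Made-up sample steps.
steps = [
    Step("Cream 1 cup of fat with sugar."),
    Step(None, inferred="Beat in the eggs."),
    Step("Bake in a [smudged] oven.", inferred="Bake in a moderate oven."),
]

for step in steps:
    print(step.display())
```

Because the inferred text never replaces the transcription, a reader can always tell which words came from the page and which came from the model.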

Even at this lowest-stakes end of the trust spectrum, the bar is non-trivial:

A hundred-year-old recipe with a missing step needs the right step inferred, not merely a plausible one. Matt built a variety of custom AI functions that ensure recipes are complete and cookable. That trust floor had to be cleared before anything else mattered.

Then he showed the app to his daughter, and the picture got bigger: “She wanted to upload videos from social media. That’s how she cooks.”

A 25-second vertical video is nearly impossible to cook from (exhibit A: these viral recipes). There’s no readable list of ingredients, no clean sequence of steps. But it’s how an entire generation now learns to cook.

Neither generation was getting what they needed: the older one had recipes that were physically failing; the younger one had recipes locked inside a format you can’t actually cook from.

“There’s a preservation gap where you have an analog generation and a digital generation. There’s really no bridge.”

Heirloom is that bridge.

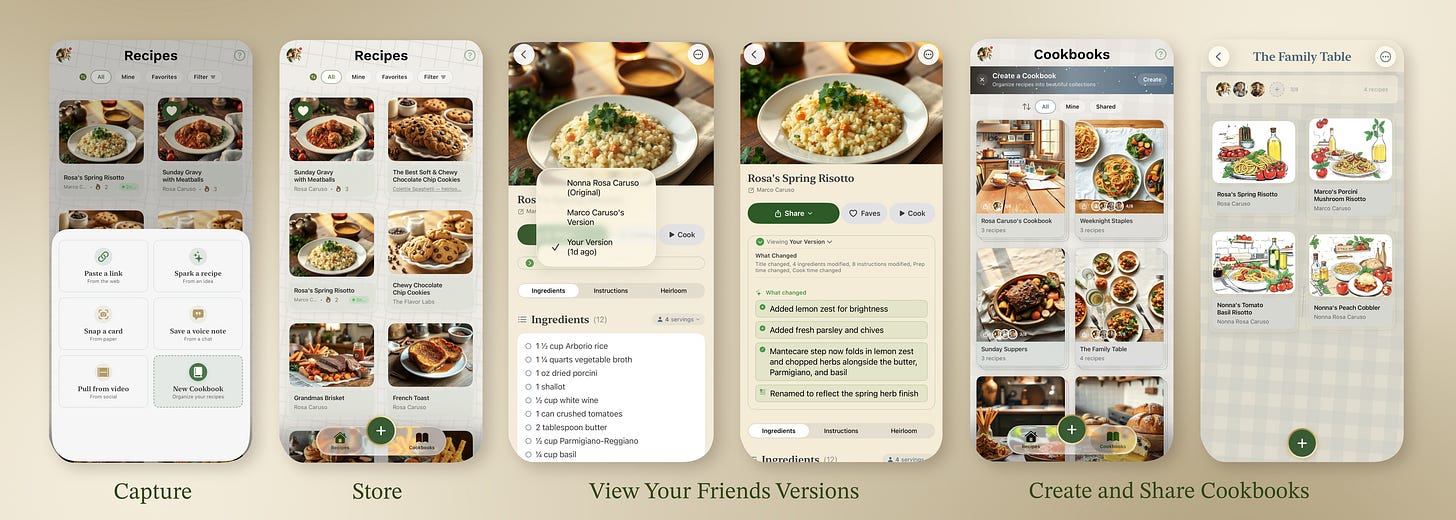

Older family members can digitize physical recipes or share URLs. Younger ones can rescue recipes from vertical video. Either can use web import or generative recipes for free. Whatever comes in, the app converts it into a consistent recipe you can use, with the option to print a physical cookbook.

Jobs to be Done

Matt, like me, is a fan of the Jobs to be Done (JTBD) framework.

JTBD: the underlying need a user is trying to satisfy, and why they’ll “hire” your product to meet it. If my JTBD is to quickly get from point A to point B in Oakland, I can hire a Lyft, my own car, an e-bike, or a scooter to get there.

For Matt, JTBD is especially useful when building with AI, because it surfaces the right question first.

“JTBD is really good to help understand whether I’m hiring AI in the right way in a given situation.”

He applies it three ways:

Mapping stakes: JTBD helped him determine whether a job was too high-consequence for AI’s current capabilities. This analysis killed the email app and routed him to Heirloom.

Defining the core loop: By focusing on what users were trying to accomplish, he cut several features and streamlined Heirloom down to capture, organize, share, with the family cookbook as the artifact at the end. This discipline matters with AI, where cheap, fast development makes it easy to drift from the core problem.

Researching unfamiliar domains: When Matt consults across unfamiliar domains, he uses AI to build a mental model of the problem space quickly, then applies JTBD to find the parts where AI can help. Most industries already know their “why.” JTBD gives everyone a shared vocabulary for talking about where AI fits and where it doesn’t.

“There are all these AI things getting put into products. I don’t think the people building them understand the trust level needed for people to hire AI in a given context and be successful enough to come back.”

Quality as Discipline

Matt’s original codebase ran on a laptop. Builds would lock up the machine for fifteen to twenty minutes at a time. He knew it wouldn’t survive a code review.

He upgraded to a Mac Studio and used the migration as a catalyst to refactor the entire project into a modular Swift Package Manager architecture—the kind of structural decision he’d want a senior engineer to make.

“I’m not going to bring somebody in as a partner and give them slop.”

The same discipline runs through every choice.

“I want durable infrastructure, resilient decisions. I don’t want to put a wrapper around my iOS codebase and ship that to Android. I’ll do something native so the craft is there, and the confidence in iOS and Android as tailwinds. I’ll always try to balance quality decisions even with greater overhead.”

This ties back to hat consolidation: you can wear many hats with AI as long as you’re not lowering the quality bar on any one hat.

A World Without a Reward Function

Matt believes today’s AI has a structural gap that no amount of additional flat data will close.

“Today’s AI is in a cave. It’s all flat. It’s all trained on images, text, audio, and video. None of it has depth. None of it understands real-world consequences.”

The critique is deeper than “models can’t see in 3D” (though that’s part of it): it’s that agents lack a reward function tied to physical reality.

Drop a ceramic plate and it shatters. Knead dough too long and it toughens. A model can describe those consequences and even simulate them, but it never has to pay the cost or reap the reward of an action.

This is a throwback to the limitations Matt faced in 1997: the machine couldn’t render wood because it didn’t understand light. Today, it can render anything, but it still doesn’t understand consequence.

The cave got more sophisticated, but it’s still a cave.

The AI Hype Check

I ask every practitioner I interview the same question: is AI overhyped or underhyped?

Matt’s answer: both, depending on where you’re looking.

“It’s underhyped where it is making an impact, and it’s overhyped where it’s not. The garbage is predictably cheap, everywhere, overhyped and overexposed.”

He’s building Heirloom as a deliberate counter-example, and is clear-eyed about why it matters.

“I care a lot about being an example of what you can do with AI if you mostly know what you’re doing, understand what customers want, and care about quality.”

The AI conversation keeps getting flattened into pure enthusiasm or pure backlash, when both are true simultaneously and neither is the interesting part. Matt hopes users can see value in AI before the current volume of grift poisons the well entirely.

“Slop got added to the dictionary last year. It’s on the record now, and that’s not good. We need the rising tide to lift all ships.”

This interview has been edited for length and clarity. Opinions expressed are solely Matt’s own.

Four Things to Try This Week

First, give Heirloom a try: capture your favorites, create a cookbook, then share it with your friends and family.

Then, try on these takeaways from Matt’s playbook:

JTBD as a feature filter. Identify the job the user is hiring your feature to perform. For each JTBD, ask whether AI is the best path to completing the job. If a feature doesn’t meaningfully shorten the distance between intent and outcome, cut it.

Determine the trust level first. Before speccing any AI feature, write down the worst-case outcome. Bad recipe? Missed mortgage payment? Design to that specific reliability bar. If the technology can’t currently meet it, find a problem space where the stakes allow for more flexibility.

Consolidation, not compromise. Use AI to collapse the distance between disciplines without lowering your quality bar. Hat consolidation is a force multiplier, not an excuse for slop.

Climb your own pipeline. Whatever your craft origin—design, engineering, writing, ops—there’s a stack above and below you that AI now makes accessible. Pick a rung up, hold the quality bar, and ship.
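The trust-level takeaway above can be written down as a simple gate. This is a sketch under stated assumptions, not Matt's process: the `AIFeature` fields, the `triage` function, and every number below are hypothetical placeholders you would replace with your own worst-case analysis and eval results.

```python
from dataclasses import dataclass

@dataclass
class AIFeature:
    name: str
    worst_case: str               # written down before any spec work
    required_reliability: float   # bar implied by the worst case (illustrative number)
    measured_reliability: float   # what your own evals show today (illustrative number)

def triage(feature: AIFeature) -> str:
    """Build only if today's measured reliability clears the bar the stakes demand."""
    if feature.measured_reliability >= feature.required_reliability:
        return "build"
    return "pick a lower-stakes problem"

# Made-up figures, for illustration only.
ideas = [
    AIFeature("recipe gap-filling", "a bad dinner", 0.95, 0.97),
    AIFeature("email triage", "a missed mortgage payment", 0.9999, 0.97),
]
decisions = {feature.name: triage(feature) for feature in ideas}

for name, decision in decisions.items():
    print(f"{name}: {decision}")
```

The point of the sketch is the ordering: the worst case and the bar it implies are recorded first, and the build decision follows from them rather than from enthusiasm for the feature.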

Know a practitioner navigating AI in their work who should be featured here? Reach out to hello@sendfull.com.

⏪ Recent Episodes

ep. 91. How a Maker-Turned-Product-Leader Keeps the Human in the Frame with AI

ep. 90. How a Leading MarTech Program Manager Uses AI to Track What Still Matters

ep. 89. Cognitive Offloading to AI: The Peril and the Promise

If you like these episodes, you’ll love my book. Designing Automated Futures is coming this year with Rosenfeld Media. 📣 Sign up to be the first to know about new book releases, sales, and events.

🚀 Sendfull in the Wild

School’s Out

This semester, I designed and taught UX for AI, a new graduate course at UC Berkeley School of Information. We explored the questions of if, when, and how to use AI in product design through hands-on building, interdisciplinary conversation grounded in HCI and human factors research, and reflection.

Students designed both a new feature for an existing product and a 0-to-1 concept, grounded in evidence about user needs and the market landscape. Trust calibration, designing for situational awareness, task allocation, and defining clear AI evals were key aspects of students’ work. We vibe coded with Claude Code, Figma Make, and Lovable.

The course wrapped last week. I can’t wait to see what the Class of 2026 builds next!

Rosenfeld Media Meet & Greet with UX Authors

On April 30th, I joined fellow authors Chris Noessel, Josh Clark, Veronika Kindred, Indi Young, Andy Welfle, Jenae Cohn, and Harry Max in a panel discussion led by Lou Rosenfeld. The event was hosted in the DesignMap office in San Francisco, which set the tone for rich discussions on topics like the perils of cognitive offloading to AI and the future of design education.

📖 Good Reads

2026 AI Index Report: Stanford’s annual AI state of the union. One striking finding: generative AI reached 53% population adoption within three years, faster than the PC or the internet.

From Clicks to Conversations: IEEE article by Lucy Manole, arguing that HCI is shifting from click-based, predefined navigation to conversational, intent-driven interaction. This requires designers to handle probabilistic outputs, build in transparency and reversibility, and treat AI as a collaborator rather than a tool.

You’re Not Asking the AI. You’re Activating It: The Strategic Linguist applies frame theory to AI interaction, showing that the words you choose (asking, delegating, conversing) activate different registers in the model and shape both its output and your interpretation of it.

That’s a wrap 🌯. More on UX, HCI, and strategy from Sendfull in two weeks.
